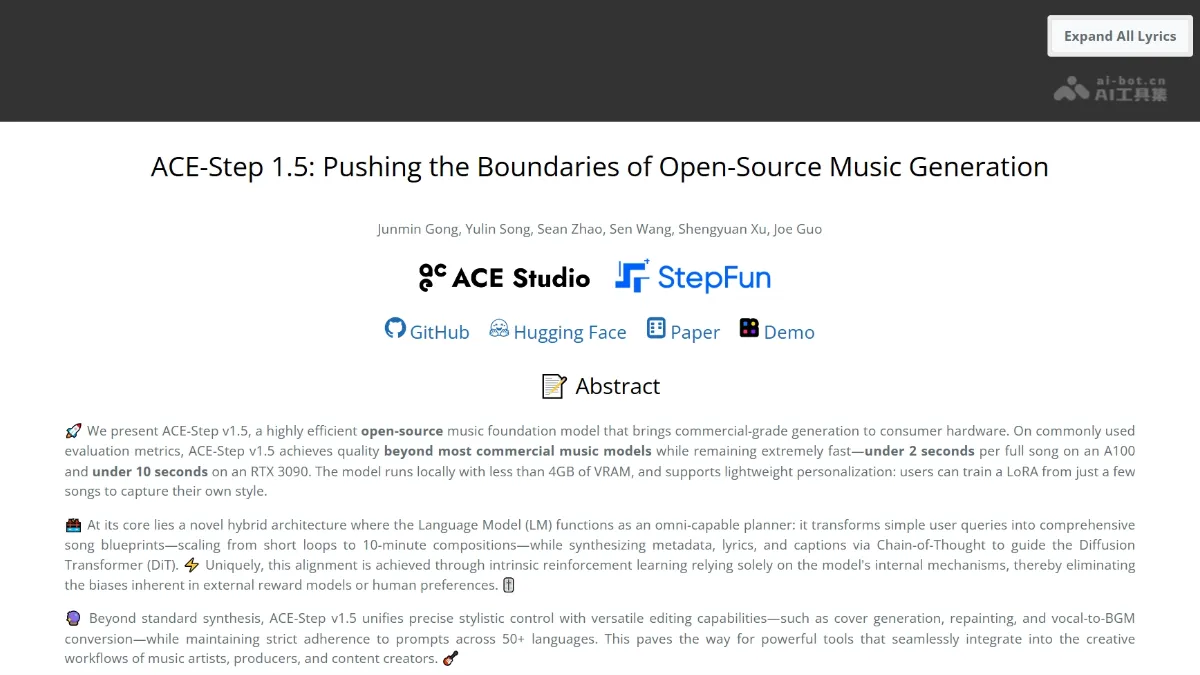

ACE-Step 1.5 - A music generation model open-sourced by ACE Studio and StepFun

ACE-Step 1.5 is an open-source music generation model jointly developed by ACE Studio and StepFun, enabling commercial-grade music generation on consumer-grade hardware. The model employs a hybrid architecture: a language model acts as a planner, transforming user prompts into song blueprints, while a Diffusion Transformer handles acoustic rendering.

ACE-Step 1.5 is positioned as a foundation model for music generation. With 4-8 distilled inference steps, it takes about 2 seconds to generate a 4-minute song on an A100 and about 10 seconds on an RTX 3090, and it needs less than 4 GB of video memory. ACE-Step 1.5 supports more than 50 languages, precise style control, and editing functions such as cover generation, repainting, and vocal-to-accompaniment conversion. Users can train a LoRA on a small number of songs to achieve personalized style transfer.

Main features of ACE-Step 1.5

- Music generation: Generates complete songs from text prompts, with lyrics and singing in more than 50 languages, at any length from 10-second loops to 10-minute works.

- Editing: Provides six editing capabilities for fine-grained control over and re-creation of existing audio: audio repainting, cover generation, vocal-to-accompaniment conversion, track extraction, layered arrangement, and continuation/completion.

- Style control: Accurately parses and follows complex prompts that contain professional music terminology, achieving zero-shot voice cloning and strict style adherence.

- Personalization: Users only need to provide a few reference songs to train a customized model that captures their own style through lightweight LoRA fine-tuning.

- Efficiency: The model runs locally on consumer-grade GPUs with less than 4 GB of video memory, achieves sub-second generation, and supports batched parallel sampling for exploring diverse creative candidates.

Technical principles of ACE-Step 1.5

- Hybrid reasoning-diffusion architecture: ACE-Step 1.5 uses two cooperating components to decouple music generation into a planning stage and a rendering stage. The language model (based on Qwen3-0.6B) serves as the “composer agent”: through chain-of-thought reasoning, it converts the user prompt into a YAML blueprint containing BPM, key, duration, lyrics, and an acoustic description. The Diffusion Transformer (DiT, about 2 billion parameters) serves as the acoustic renderer, receiving these standardized conditions and focusing on generating high-fidelity audio. This division of labor frees the DiT from the burden of semantic understanding, while the LM’s multi-task training ensures robust alignment across more than 50 languages.

- Efficient inference optimization: To achieve real-time generation on consumer-grade hardware, the team introduced an adversarial dynamic-shift distillation technique. It builds on Decoupled DMD2 and adds a GAN objective with a latent-space discriminator. Randomly sampling the shift parameter from {1, 2, 3} exposes the model to diverse denoising states and avoids the overfitting caused by fixed step sizes. This compresses inference from 50 steps to 4-8 steps, so generating a 240-second audio track takes only about 1 second on an A100, a 200-fold speedup. The adversarial feedback also helps the student model surpass the teacher’s audio quality.

- Intrinsic reinforcement learning alignment: The system establishes a unified intrinsic reinforcement learning framework to avoid external bias. For the DiT, an attention alignment score (AAS) is proposed as the intrinsic reward; it uses dynamic time warping to measure lyric token coverage, attention monotonicity, and path confidence. After optimization, the correlation between lyrics-audio synchronization and human judgment exceeds 95%. For the LM, the GRPO algorithm is used with a reward model built on pointwise mutual information (PMI). The LM plays the dual role of “composer” and “listener”, and PMI penalizes generic descriptions while rewarding specific annotations. The final reward is dynamically weighted as 50% style and atmosphere, 30% lyric content, and 20% metadata constraints.

- Unified masked generation framework: Continuous audio latents are discretized into a 5 Hz codebook representation through finite scalar quantization (FSQ), which yields a flexible masked generation paradigm. By manipulating the source latents and the mask configuration, a single model supports six modes: text-to-music, cover, repainting, track extraction, layering, and completion. FSQ uses attention pooling to compress the 25 Hz latent space into structured source latents, which are concatenated with the noisy target and the mask and then processed by the patchify layer. The unified representation simplifies multi-task training, and the quantized latents help preserve melody and rhythm with high fidelity during conversion.
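
To make the planning stage of the hybrid architecture concrete, here is a minimal sketch of what a song blueprint with the fields listed above (BPM, key, duration, lyrics, acoustic description) might look like when serialized as YAML. The `SongBlueprint` class, its field names, and the sample values are hypothetical illustrations, not the schema ACE-Step 1.5 actually emits.

```python
from dataclasses import dataclass


@dataclass
class SongBlueprint:
    """Hypothetical container for the planner's output; field names are assumptions."""
    bpm: int
    key: str
    duration_seconds: int
    caption: str  # acoustic description handed to the renderer
    lyrics: str

    def to_yaml(self) -> str:
        """Serialize to simple YAML by hand (no external YAML library needed)."""
        lines = [
            f"bpm: {self.bpm}",
            f"key: {self.key}",
            f"duration_seconds: {self.duration_seconds}",
            f"caption: {self.caption}",
            "lyrics: |",
        ]
        # A YAML block scalar keeps the lyric line breaks intact.
        lines += [f"  {line}" for line in self.lyrics.splitlines()]
        return "\n".join(lines)


blueprint = SongBlueprint(
    bpm=92,
    key="A minor",
    duration_seconds=180,
    caption="warm lo-fi hip hop, mellow electric piano, soft female vocal",
    lyrics="[verse]\nCity lights are fading slow\n[chorus]\nStay a little longer",
)
print(blueprint.to_yaml())
```

Keeping the blueprint as structured text is what lets the renderer receive standardized conditions regardless of how loosely the original prompt was worded.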
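
The LM reward described under intrinsic reinforcement learning alignment can be illustrated with a short sketch. It assumes PMI is computed as the difference between a conditional and an unconditional log-probability, and it applies the 50/30/20 split as fixed weights, whereas the text describes the weighting as dynamic. The function names and the sample probabilities are hypothetical.

```python
import math

# Weights taken from the text: style/atmosphere, lyric content, metadata constraints.
WEIGHTS = {"style": 0.5, "lyrics": 0.3, "metadata": 0.2}


def pmi(logp_conditional: float, logp_marginal: float) -> float:
    """Pointwise mutual information: log p(x | y) - log p(x)."""
    return logp_conditional - logp_marginal


def combined_reward(scores: dict) -> float:
    """Weighted sum of per-aspect scores using the fixed 50/30/20 split."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# A generic description is about as likely with or without the audio,
# so its PMI stays near zero.
generic = pmi(math.log(0.20), math.log(0.19))
# A specific description is far more likely given the audio, so its PMI is large.
specific = pmi(math.log(0.20), math.log(0.01))

reward = combined_reward({"style": specific, "lyrics": 1.0, "metadata": 0.5})
print(f"generic PMI={generic:.3f}, specific PMI={specific:.3f}, reward={reward:.3f}")
```

Subtracting the unconditional log-probability is what removes credit from descriptions that would fit almost any song.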
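
The 25 Hz to 5 Hz compression and the quantization step of the unified framework can be sketched in plain Python. This is a simplified illustration under stated assumptions: mean pooling stands in for the learned attention pooling, the quantizer follows the generic FSQ recipe (bound each dimension with tanh, then round to a small set of integer levels), and the latent dimension and level counts are made up for the example.

```python
import math


def pool_frames(frames, factor=5):
    """Average each run of `factor` frames (a stand-in for attention pooling)."""
    pooled = []
    for start in range(0, len(frames) - factor + 1, factor):
        window = frames[start:start + factor]
        dim = len(window[0])
        pooled.append([sum(f[d] for f in window) / factor for d in range(dim)])
    return pooled


def fsq_quantize(vector, levels):
    """Bound each value with tanh, then round to one of `levels[d]` integers.

    Assumes odd level counts so the levels are symmetric around zero.
    """
    codes = []
    for value, n_levels in zip(vector, levels):
        half = (n_levels - 1) / 2
        codes.append(round(math.tanh(value) * half))
    return codes


# One second of toy 25 Hz latents with 3 dimensions each (values are arbitrary).
latents_25hz = [[math.sin(0.3 * t), math.cos(0.2 * t), 0.1 * t - 1.0] for t in range(25)]
levels = [5, 5, 5]  # hypothetical: 5 levels per dimension, 125 possible codes

latents_5hz = pool_frames(latents_25hz, factor=5)
codes_5hz = [fsq_quantize(frame, levels) for frame in latents_5hz]
print(len(latents_25hz), "frames ->", len(codes_5hz), "codes")
```

Because FSQ needs no learned codebook, the discrete source latents come directly from rounding, which keeps the representation simple to share across the six generation modes.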

ACE-Step 1.5 project address

- Project website: https://ace-step.github.io/ace-step-v1.5.github.io/

- GitHub repository: https://github.com/ace-step/ACE-Step-1.5

- arXiv technical paper: https://arxiv.org/pdf/2602.00744

- Online demo: https://huggingface.co/spaces/ACE-Step/Ace-Step-v1.5

Application scenarios of ACE-Step 1.5

- Music creation and production: Musicians and producers can use ACE-Step 1.5 as an inspiration tool that quickly turns text descriptions into complete song drafts, helping them get past creative blocks.

- Personalized content creation: Content creators can train a personal style model through LoRA fine-tuning and batch-generate customized background music for videos, podcasts, games, and other projects while keeping timbre consistent across works.

- Multilingual music production: The model supports accurate lyric generation and singing in more than 50 languages, which suits global music distribution, cross-cultural collaboration, and content production for minority-language music markets.

- Education and learning: By entering professional terms (such as specific modes or chord progressions) and listening to the generated results, music learners can build an intuitive understanding of music theory concepts.