GLM-5: Zhipu AI's next-generation open-source flagship model

GLM-5 is Zhipu AI's next-generation open-source flagship model. Its parameter count grows from 355B in GLM-4.5 to 744B (40B activated), and its pre-training data reaches 28.5T tokens. It is the mysterious "Pony Alpha" model that previously topped the OpenRouter popularity chart. The model is designed for complex systems engineering and long-horizon Agent tasks. It integrates DeepSeek Sparse Attention to reduce deployment costs, and Zhipu's self-developed slime asynchronous RL infrastructure improves training efficiency. On the Artificial Analysis leaderboard, GLM-5 ranks fourth in the world and first among open-source models. The model can generate Office documents, is compatible with Claude Code and other tools, and supports deployment on domestic chips such as Huawei Ascend, Moore Threads, and Cambricon. It can be tried on the z.ai official website and the BigModel.cn platform, and the API is open at the same time.
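The "40B activated" figure means GLM-5 is a sparse, mixture-of-experts style model: only a fraction of its weights participate in each token's forward pass. A quick back-of-the-envelope comparison, using only the figures quoted in this article (355B/32B and 23T tokens for GLM-4.5, 744B/40B and 28.5T for GLM-5), shows how that fraction changes. The helper name is illustrative.

```python
def active_fraction(total_b: float, active_b: float) -> float:
    """Share of parameters used per token in a sparse (MoE-style) model."""
    return active_b / total_b


# Figures quoted in this article: billions of parameters, trillions of tokens.
glm45 = {"total": 355, "active": 32, "tokens_t": 23.0}
glm5 = {"total": 744, "active": 40, "tokens_t": 28.5}

for name, m in (("GLM-4.5", glm45), ("GLM-5", glm5)):
    frac = active_fraction(m["total"], m["active"])
    print(f"{name}: {frac:.1%} of weights active per token")

print(f"Total parameters grew {glm5['total'] / glm45['total']:.2f}x")
print(f"Active parameters grew {glm5['active'] / glm45['active']:.2f}x")
print(f"Pre-training tokens grew {glm5['tokens_t'] / glm45['tokens_t']:.2f}x")
```

Since per-token compute scales roughly with active parameters, total capacity about doubles while per-token compute grows by only about a quarter.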

Main features of GLM-5

- Complex systems engineering: Handles multi-level technical tasks such as front-end development and back-end architecture design, and supports end-to-end engineering delivery from requirements analysis to code implementation.

- Long-horizon Agent tasks: Has long-term planning and resource management capabilities, and can make decisions autonomously and achieve goals in simulated business scenarios that require a full year of continuous operation, such as Vending-Bench 2.

- Intelligent document generation: Converts text or source materials directly into .docx, .pdf, .xlsx, and other formats, producing ready-to-use professional documents such as PRDs, financial reports, and lesson plans.

- Multi-tool collaboration: Compatible with Claude Code, OpenClaw, and other mainstream development toolchains, enabling automated operation and collaboration across applications.

Technical principles of GLM-5

- Large-scale pre-training expansion: Model parameters grow from 355B (32B activated) to 744B (40B activated), and pre-training data increases from 23T to 28.5T tokens, using more compute to strengthen the model's general-intelligence foundation.

- Asynchronous reinforcement learning infrastructure “slime”: This self-developed asynchronous RL training framework addresses the efficiency bottleneck of reinforcement learning for large language models. It supports parallelized reward computation and policy updates, enabling finer-grained post-training iterations and narrowing the gap between the model's pre-trained capabilities and its real-world performance.

- Sparse attention mechanism: DeepSeek Sparse Attention is integrated for the first time, significantly reducing token-processing and deployment costs in Agent scenarios while keeping long-context performance essentially lossless.

- Deep adaptation to domestic compute: Low-level operator optimization and hardware acceleration have been completed for domestic chips including Huawei Ascend, Moore Threads, Cambricon, Kunlunxin, T-Head (Pingtouge), and MetaX (Muxi), delivering high-throughput, low-latency inference.
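The core idea behind sparse attention can be sketched as top-k selection: each query attends to only the k most relevant earlier tokens instead of all of them, so the softmax and weighted sum shrink from n terms to k. This is a simplified illustration, not DeepSeek Sparse Attention itself: as an assumption for brevity, selection here reuses the scaled dot product, whereas DSA uses a separate lightweight indexer to score and pick tokens. The function name is hypothetical.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def topk_sparse_attention(q, keys, values, k):
    """Attend from one query to only the k highest-scoring keys.

    `keys` and `values` hold the tokens visible to this query (already causal).
    For simplicity the selection score is the scaled dot product itself; DSA
    instead uses a separate lightweight indexer to choose the tokens.
    """
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, key)) for key in keys]
    k = min(k, len(keys))
    # Keep the k best positions; the rest never enter softmax or the weighted sum.
    selected = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in selected])
    out = [0.0] * len(values[0])
    for w, i in zip(weights, selected):
        for j, v in enumerate(values[i]):
            out[j] += w * v
    return out, selected


if __name__ == "__main__":
    q = [1.0, 0.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
    values = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [5.0, 5.0]]
    out, selected = topk_sparse_attention(q, keys, values, k=2)
    print(selected, [round(x, 3) for x in out])
```

In the example, only positions 0 and 2 are mixed into the output; the large value at position 3 is ignored entirely. Setting k to the sequence length recovers ordinary dense attention.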

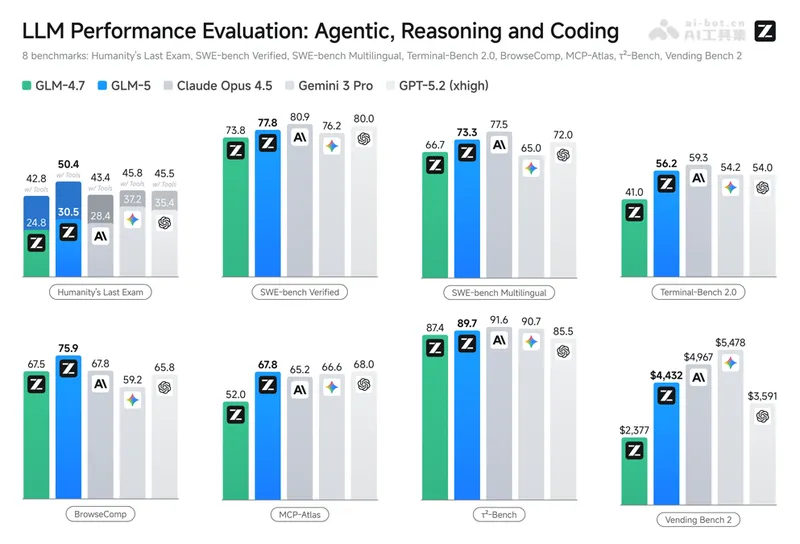
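This article does not describe slime's internals, so the following is only a generic sketch of the asynchronous RL pattern it refers to: rollout generators run in background threads and never wait for the optimizer, while the trainer pulls samples from a bounded buffer and discards any produced by a policy more than `max_staleness` versions old. Rewards are random placeholders and the "update" is just a version bump; all names here are hypothetical.

```python
import queue
import random
import threading
from dataclasses import dataclass


@dataclass
class Rollout:
    policy_version: int  # version of the policy that generated this sample
    reward: float        # placeholder reward (random here)


class PolicyStore:
    """Latest policy version, shared by generator threads and the trainer."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._version = 0

    def get(self) -> int:
        with self._lock:
            return self._version

    def bump(self) -> None:
        with self._lock:
            self._version += 1


def generate(store, buf, stop, seed):
    """Produce rollouts with whatever policy is current; never wait for training."""
    rng = random.Random(seed)
    while not stop.is_set():
        sample = Rollout(store.get(), rng.random())
        try:
            buf.put(sample, timeout=0.01)
        except queue.Full:
            pass  # buffer full: retry unless asked to stop


def train(store, buf, num_updates, batch_size, max_staleness):
    """Consume rollouts asynchronously, skipping ones that are too off-policy."""
    staleness, mean_rewards, dropped = [], [], 0
    for _ in range(num_updates):
        batch = []
        while len(batch) < batch_size:
            sample = buf.get()
            lag = store.get() - sample.policy_version
            if lag > max_staleness:
                dropped += 1
                continue
            batch.append(sample)
            staleness.append(lag)
        # Placeholder for a real policy-gradient step on this batch.
        mean_rewards.append(sum(s.reward for s in batch) / len(batch))
        store.bump()
    return staleness, mean_rewards, dropped


def run_async_rl(num_generators=2, num_updates=5, batch_size=4, max_staleness=1):
    store, buf, stop = PolicyStore(), queue.Queue(maxsize=16), threading.Event()
    workers = [
        threading.Thread(target=generate, args=(store, buf, stop, seed), daemon=True)
        for seed in range(num_generators)
    ]
    for w in workers:
        w.start()
    try:
        result = train(store, buf, num_updates, batch_size, max_staleness)
    finally:
        stop.set()
        for w in workers:
            w.join()
    return store.get(), result


if __name__ == "__main__":
    version, (staleness, rewards, dropped) = run_async_rl()
    print(f"policy version {version}, max staleness used {max(staleness)}, dropped {dropped}")
```

The staleness bound is the key design choice: a larger value keeps generators busier but trains on more off-policy data.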

GLM-5 performance

- Reasoning ability
  - AIME 2026 I: 92.7%, on par with DeepSeek-V3.2; HMMT Nov. 2025: 96.9%, ahead of most competing models.
  - GPQA-Diamond (expert-level reasoning): 86.0%; IMOAnswerBench: 82.5%.

- Programming ability
  - SWE-bench Verified (real-world software engineering): 77.8%; SWE-bench Multilingual: 73.3%. Both are roughly 4 percentage points higher than GLM-4.7.
  - Terminal-Bench 2.0 (terminal operation): 56.2%, rising to 61.1% in the Claude Code environment, well ahead of GLM-4.7.
  - CyberGym (cybersecurity): 43.2%, nearly double GLM-4.7's 23.5%, demonstrating attack-and-defense capability on complex systems.

- Agent and tool usage
  - BrowseComp (web browsing): 62.0%, rising to 75.9% with a context-management strategy, surpassing Kimi K2.5.
  - τ²-Bench (multi-domain tool calling): 89.7%; MCP-Atlas (public set): 67.8%; Tool-Decathlon: 38.0%.

- Comprehensive ranking: fourth in the world and first among open-source models on the Artificial Analysis leaderboard.
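Benchmark comparisons mix absolute percentage-point gains with relative multiples, which are easy to confuse. A small check using only the scores listed above makes the distinction explicit; the helper names are illustrative.

```python
def pp_gain(before: float, after: float) -> float:
    """Absolute gain in percentage points."""
    return after - before


def ratio(before: float, after: float) -> float:
    """Relative multiple of the new score over the old one."""
    return after / before


# Scores quoted above, in percent.
print(f"CyberGym vs GLM-4.7: {ratio(23.5, 43.2):.2f}x")
print(f"Terminal-Bench 2.0 in Claude Code: +{pp_gain(56.2, 61.1):.1f} pp")
print(f"BrowseComp with context management: +{pp_gain(62.0, 75.9):.1f} pp")
```

"Nearly double" on CyberGym corresponds to about 1.84x, while the BrowseComp context-management gain is an absolute jump of about 14 points.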

How to use GLM-5

- Online experience: On the z.ai official website, you can manually select the GLM-5 model and try Chat mode or Agent mode for free; Agent mode supports multi-tool collaboration and document generation.

- API access: GLM-5 is available through the BigModel.cn platform or the Z.ai API service, with an OpenAI-compatible interface.

- Local deployment: Download the BF16 or FP8 weights from HuggingFace and serve them with vLLM, SGLang, or xLLM; 8-GPU parallel inference is supported.

- Non-NVIDIA environments: The model can be deployed on domestic chips such as Huawei Ascend and Moore Threads, for which official targeted optimization solutions are provided.

- Development tool integration: Subscribers to the GLM Coding Plan can enable GLM-5 directly, or remotely orchestrate multi-Agent collaboration through the Z Code visual environment.
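Because both the hosted API and a locally served model expose an OpenAI-compatible interface, one request builder can target either by changing the base URL. This is a minimal standard-library sketch that builds the request without sending it. The hosted base URL, the model id `glm-5`, and the `ZHIPU_API_KEY` environment variable name are assumptions; check the BigModel.cn or Z.ai documentation for the exact values. `http://localhost:8000/v1` is vLLM's default address for its OpenAI-compatible server.

```python
import json
import os
import urllib.request


def build_chat_request(base_url, api_key, model, messages, **params):
    """Build (but do not send) an OpenAI-style chat-completions request."""
    body = {"model": model, "messages": messages, **params}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


HOSTED_BASE_URL = "https://open.bigmodel.cn/api/paas/v4"  # assumption: verify in the docs
LOCAL_BASE_URL = "http://localhost:8000/v1"  # vLLM's default OpenAI-compatible server

req = build_chat_request(
    HOSTED_BASE_URL,
    os.environ.get("ZHIPU_API_KEY", "YOUR_KEY"),  # hypothetical env var name
    "glm-5",  # assumed model id
    [{"role": "user", "content": "Summarize the GLM-5 release in one sentence."}],
    temperature=0.7,
)

# To actually send it (requires network access and a valid key):
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Pointing the same call at `LOCAL_BASE_URL` (with the served model name) targets a local vLLM deployment instead.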

GLM-5 project address

- Project official website: https://z.ai/blog/glm-5

- GitHub repository: https://github.com/zai-org/GLM-5

- HuggingFace model library: https://huggingface.co/zai-org/GLM-5
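Before downloading the BF16 or FP8 weights for local deployment, a rough weight-memory estimate helps with hardware planning. Note that although only 40B parameters are active per token, all 744B must stay resident in memory. This sketch assumes the weights are split evenly across 8 GPUs and ignores KV cache, activations, quantization scales, and framework overhead, so real requirements are higher.

```python
BYTES_PER_PARAM = {"BF16": 2, "FP8": 1}


def weight_gb_per_gpu(total_params_b: float, dtype: str, num_gpus: int) -> float:
    """Approximate weight memory per GPU in decimal GB (weights only)."""
    # Billions of parameters x bytes per parameter = gigabytes.
    total_gb = total_params_b * BYTES_PER_PARAM[dtype]
    return total_gb / num_gpus


for dtype in ("BF16", "FP8"):
    print(f"{dtype}: ~{weight_gb_per_gpu(744, dtype, 8):.0f} GB of weights per GPU on 8 GPUs")
```

Halving the bytes per parameter is why the FP8 checkpoint is the more practical choice on memory-constrained 8-GPU nodes.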

Application scenarios of GLM-5

- Complex systems engineering: Supports end-to-end delivery of large projects, independently completing the full process of requirements breakdown, architecture design, code implementation, and deployment.

- Legacy system refactoring: Understands existing codebases in depth and carries out back-end architecture optimization and modernization.

- Deep debugging and fixing: Analyzes logs, locates root causes, and iteratively fixes stubborn bugs until the system runs stably.

- Intelligent assistant: Automatically performs scheduled tasks such as searching, organizing, and publishing around the clock, acting as the user's digital intern.

- Business decision optimization: Demonstrates long-term planning and resource management in simulated business scenarios to formulate strategies intelligently.