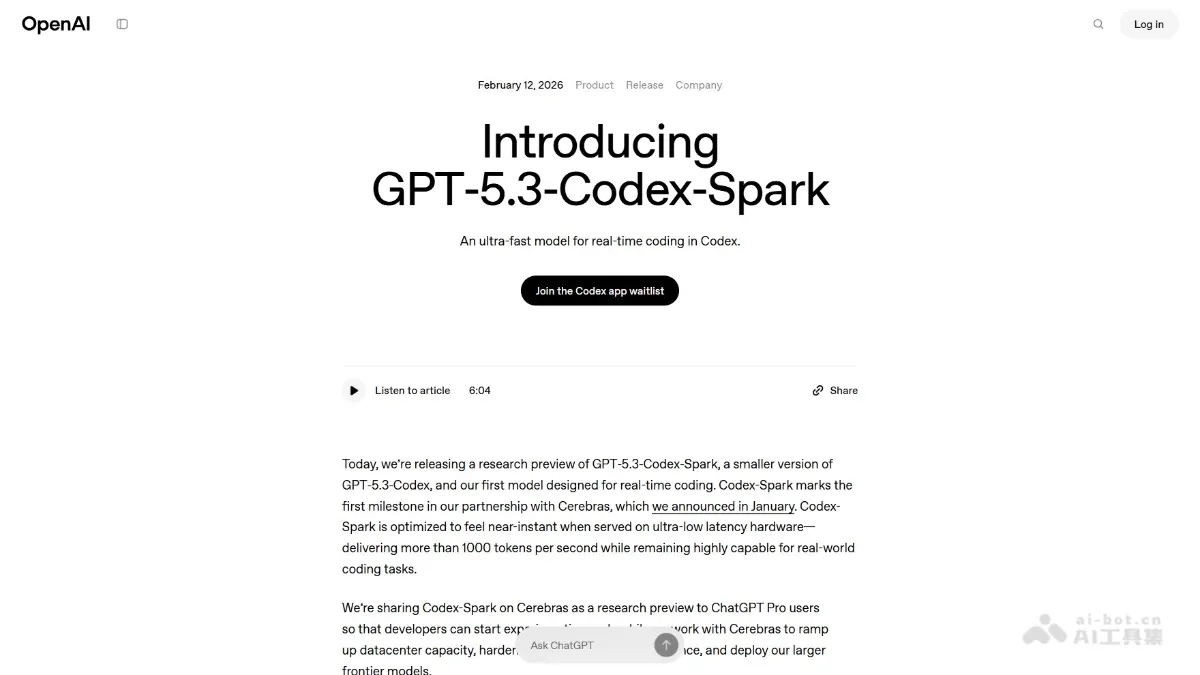

GPT-5.3-Codex-Spark - OpenAI's lightweight model for real-time coding

GPT-5.3-Codex-Spark is OpenAI's first lightweight model built specifically for real-time coding, with an emphasis on raw speed. It runs on the Cerebras WSE-3 wafer-scale chip, reaches inference speeds above 1000 tokens/second, and supports a 128k context window. Unlike the standard Codex model, which excels at long-running autonomous tasks, Codex-Spark targets real-time collaboration: developers can interrupt and correct it while it is still generating, which makes coding interactions feel more immediate. OpenAI also rebuilt the underlying inference stack, cutting per-round-trip client/server overhead by 80%. Codex-Spark is rolling out to ChatGPT Pro users as a research preview and is available in the latest versions of the Codex app, CLI, and VS Code extension.
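To get a feel for how much code a 128k context window can hold, here is a rough budget check. It is a sketch under loudly stated assumptions: "128k" is read as 128,000 tokens, token counts are approximated with a rule of thumb of about 4 characters per token rather than a real tokenizer, and the `reserve_for_output` margin and the helper names (`estimate_tokens`, `fits_in_context`, `read_sources`) are hypothetical, not part of any OpenAI tool.

```python
import math
from pathlib import Path
from typing import Iterable, List, Tuple

CONTEXT_WINDOW_TOKENS = 128_000  # assumption: "128k" read as 128,000 tokens
CHARS_PER_TOKEN = 4.0            # assumption: rough heuristic, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a string from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(texts: Iterable[str], reserve_for_output: int = 8_000) -> bool:
    """Return True if the estimated input plus a hypothetical output reserve fits the window."""
    used = sum(estimate_tokens(t) for t in texts)
    return used + reserve_for_output <= CONTEXT_WINDOW_TOKENS


def read_sources(root: str, suffixes: Tuple[str, ...] = (".py", ".ts", ".go")) -> List[str]:
    """Collect the text of source files under `root` (illustrative helper)."""
    return [
        p.read_text(encoding="utf-8", errors="ignore")
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in suffixes
    ]
```

For example, `fits_in_context(read_sources("my_project"))` gives a quick yes/no for a hypothetical `my_project` directory; because the estimate is heuristic, results close to the limit should be checked with a real tokenizer.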

Key features of GPT‑5.3‑Codex‑Spark

- Live coding collaboration: Developers can interrupt, correct, or redirect the model while watching its output, giving a responsive, real-time interactive experience.

- Ultra-fast inference: Runs on the Cerebras WSE-3 wafer-scale chip at more than 1000 tokens/second and is optimized for ultra-low-latency workloads.

- Precise code editing: By default the model works in a lightweight style, making only minimal, targeted changes to quickly adjust logic, interfaces, or structure.

- Low-latency architecture: By introducing persistent WebSocket connections, rewriting the inference stack, and optimizing the Responses API, OpenAI reduced per-round-trip client/server overhead by 80%, per-token overhead by 30%, and time to first token by 50%.

- Large-context processing: Supports a 128k context window, enabling real-time analysis and modification of large codebases.

- Dual-mode collaboration: As OpenAI's first real-time coding model, it is planned to be combined in the future with the long-horizon reasoning of the standard Codex model, so that real-time interaction and time-consuming background tasks can run in parallel, with interaction speed and task breadth balanced automatically.

- Multi-platform access: Available in the Codex app, the Codex CLI, and the VS Code extension, so developers can use it across different workflows.
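The reported overhead cuts can be made concrete with a back-of-envelope latency model. This sketch assumes that one interaction's latency is the sum of per-round-trip overhead, time to first token, a per-token serving overhead, and raw generation time at a fixed throughput. That additive model, the `LatencyProfile` name, and every baseline number are illustrative assumptions, not OpenAI's measurements; only the 80%, 30%, and 50% reductions and the roughly 1000 tokens/second rate come from the text.

```python
from dataclasses import dataclass, replace

# Reported reductions (fraction of the overhead removed).
ROUND_TRIP_CUT = 0.80  # per client/server round trip
PER_TOKEN_CUT = 0.30   # per-token overhead
TTFT_CUT = 0.50        # time to first token


@dataclass(frozen=True)
class LatencyProfile:
    """Hypothetical latency breakdown for one interaction (all times in ms)."""
    round_trips: int
    round_trip_overhead_ms: float
    time_to_first_token_ms: float
    per_token_overhead_ms: float
    tokens_per_second: float

    def total_ms(self, output_tokens: int) -> float:
        """Assumed additive model: round trips + TTFT + per-token overhead + generation."""
        generation_ms = output_tokens / self.tokens_per_second * 1000.0
        return (
            self.round_trips * self.round_trip_overhead_ms
            + self.time_to_first_token_ms
            + output_tokens * self.per_token_overhead_ms
            + generation_ms
        )


def with_reported_cuts(p: LatencyProfile) -> LatencyProfile:
    """Scale only the overhead terms; raw generation throughput stays unchanged."""
    return replace(
        p,
        round_trip_overhead_ms=p.round_trip_overhead_ms * (1 - ROUND_TRIP_CUT),
        per_token_overhead_ms=p.per_token_overhead_ms * (1 - PER_TOKEN_CUT),
        time_to_first_token_ms=p.time_to_first_token_ms * (1 - TTFT_CUT),
    )


# Made-up baseline numbers, chosen only to keep the arithmetic easy to follow.
baseline = LatencyProfile(
    round_trips=4,
    round_trip_overhead_ms=50.0,
    time_to_first_token_ms=400.0,
    per_token_overhead_ms=0.5,
    tokens_per_second=1000.0,  # the "over 1000 tokens/s" figure, used as a round number
)
optimized = with_reported_cuts(baseline)

print(f"{baseline.total_ms(300):.0f} ms -> {optimized.total_ms(300):.0f} ms")  # prints "1050 ms -> 645 ms"
```

In this made-up example, most of the 405 ms saved comes from the first-token and round-trip terms, which is why those cuts matter most for the short, frequent requests typical of real-time editing.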

Technical principles of GPT‑5.3‑Codex‑Spark

- Dedicated AI accelerator architecture: Runs on the Cerebras Wafer Scale Engine 3 (WSE-3), an AI accelerator designed for high-throughput, low-latency inference that achieves massive parallelism by integrating an entire wafer into a single chip.

- Lightweight model design: As a distilled version of GPT-5.3-Codex with a smaller parameter count, it greatly reduces the computational load while retaining core coding capabilities, striking a balance between speed and performance.

- End-to-end latency optimization: The full request-response path is rebuilt. A persistent WebSocket connection replaces traditional HTTP polling to cut connection-setup overhead; key inference-stack components are rewritten to make token generation and transmission more efficient; and session initialization is improved to shorten the wait for the first token.

- Streaming response mechanism: Server-to-client streaming is optimized so that tokens are pushed as soon as they are generated; combined with incremental rendering on the client, this provides instant visual feedback.

- Targeted fine-tuning strategy: The model is trained specifically for real-time interaction, improving its efficiency on short-cycle tasks such as local code edits and quick logic adjustments while reducing its tendency toward long chains of autonomous execution.
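The streaming and interrupt behavior described above can be illustrated from the client side with a small asyncio sketch. Everything here is hypothetical: `simulated_token_stream` stands in for a server pushing tokens over a persistent connection and is not the Responses API or OpenAI's WebSocket protocol, and the draft and corrected outputs are invented. The sketch only shows the pattern: render each token as it arrives, stop consuming the moment the user interrupts, then stream a redirected answer.

```python
import asyncio
from typing import AsyncGenerator, Callable, List, Tuple

# Hypothetical first draft the "model" starts streaming; it uses the wrong operator.
FIRST_DRAFT = ["def add(a, b):\n", "    return a - b", "  # subtracts!\n", "print(add(2, 3))\n"]
# Hypothetical corrected output after the user redirects the model.
CORRECTED = ["def add(a, b):\n", "    return a + b\n"]


async def simulated_token_stream(tokens: List[str], delay_s: float = 0.0) -> AsyncGenerator[str, None]:
    """Stand-in for a server that pushes each token as soon as it is generated."""
    for tok in tokens:
        await asyncio.sleep(delay_s)  # yield control, as a network read would
        yield tok


async def render_incrementally(
    stream: AsyncGenerator[str, None],
    should_interrupt: Callable[[str], bool],
) -> Tuple[str, bool]:
    """Show tokens as they arrive; stop consuming as soon as the user decides to interrupt."""
    shown = ""
    interrupted = False
    async for tok in stream:
        shown += tok
        print(tok, end="", flush=True)  # incremental rendering: no waiting for the full reply
        if should_interrupt(shown):
            interrupted = True
            break
    await stream.aclose()  # release the stream; a real client would also ask the server to cancel
    if interrupted:
        print("\n[interrupted]")
    return shown, interrupted


async def main() -> str:
    # The user spots "a - b" mid-stream and interrupts before the rest arrives.
    await render_incrementally(simulated_token_stream(FIRST_DRAFT), lambda text: "a - b" in text)
    # Redirect: send a correction and stream the new answer to completion.
    final, _ = await render_incrementally(simulated_token_stream(CORRECTED), lambda text: False)
    return final


if __name__ == "__main__":
    asyncio.run(main())
```

Keeping the interrupt check inside the consuming loop means the client stops reading the moment the condition holds, which is the client-side half of the "interrupt while it is still generating" experience; the server-side cancellation is outside the scope of this sketch.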

GPT‑5.3‑Codex‑Spark project links

- Official website: https://openai.com/index/introducing-gpt-5-3-codex-spark/

Application scenarios of GPT‑5.3‑Codex‑Spark

- On-the-fly debugging: After finding a bug, developers can immediately call on Spark to locate and fix it without waiting through long model deliberation, and can verify each change as they go.

- Rapid interface iteration: In UI/UX development, styles, layouts, and interaction logic can be adjusted frequently, shortening the design-feedback loop.

- Code review and optimization: The model can review existing code line by line. Users get improvement suggestions immediately and can apply targeted refactorings while keeping full control of the modification process.

- Learning and exploration: Programming beginners, or developers trying out a new library, can explore API usage and understand code logic through real-time conversation; the model's immediate responses minimize interruptions to their train of thought.

- Rapid prototyping: In a product's early stages, users can quickly build an MVP, describing requirements while watching the code being generated, which speeds the path from concept to runnable code.