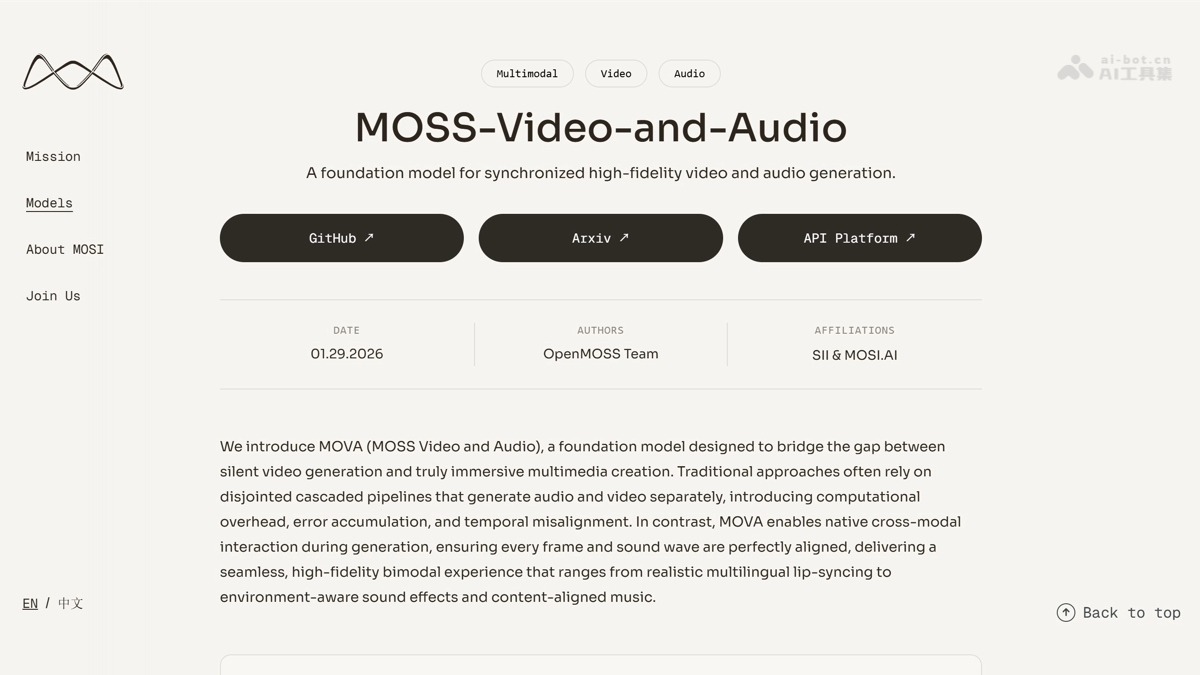

InternVL-U - An open-source unified multimodal model from Shanghai AI Lab and partner universities

InternVL-U is a lightweight unified multimodal model with 4B parameters, open-sourced by the Shanghai Artificial Intelligence Laboratory in collaboration with several universities. Its developers describe it as the first model to close the end-to-end loop of "understanding, reasoning, generation, editing" within a single system. It rests on three core designs: unified context modeling, modality-specific modularization, and decoupled visual representation. Together these are intended to address the high training cost and uneven capabilities of earlier unified models. The model is reported to surpass 14B-scale models in complex scenarios such as text rendering, scientific reasoning, and spatial modeling. Its score of 22.9 on GenExam, a benchmark for scientific image generation, leads all open-source unified models, which makes it a good fit for scientific research and education, intelligent office work, and creative content production.

Main functions of InternVL-U

- Multimodal understanding: Accurately analyzes the visual information in an image and answers complex questions about it.

- Logical reasoning: Uses chain-of-thought reasoning to break abstract natural-language instructions down into concrete, executable steps.

- Image generation: Generates high-fidelity, semantically accurate, and aesthetically pleasing images from text descriptions.

- Image editing: Precisely modifies the content of a specified region of an image while preserving the original background texture and lighting.

- Text rendering: Accurately renders Chinese, English, digits, and mathematical symbols, avoiding glyph distortion and spelling errors.

- Scientific visualization: Draws molecular structures, algorithm flowcharts, and other professional scientific illustrations that follow the conventions of the discipline.

- Spatial modeling: Performs three-dimensional geometric operations, CAD multi-view conversion, and rotation of three-dimensional objects to arbitrary angles.

- Fun content creation: Quickly generates playful, creative content suited to sharing online, such as stickers and memes.

Technical principles of InternVL-U

- Decoupled visual representations: InternVL-U uses an asymmetric visual representation strategy. For understanding tasks, a pre-trained ViT extracts high-level semantic features so that complex scenes are interpreted accurately. For generation tasks, a separate VAE compresses the image into a latent space that retains pixel-level detail. Keeping the two paths apart avoids the optimization conflict between semantic understanding and image reconstruction, so the model can stay competitive on both understanding and generation benchmarks.

- Dual-stream MMDiT generation head: The visual generation head uses a dual-stream structure that processes multimodal context features and image latent features separately. A sigmoid-gated attention mechanism adjusts the attention weights to mitigate performance degradation with long contexts. Unified MSRoPE three-dimensional positional encoding preserves spatial structure, and the head supports multi-resolution generation from 512 to 1024 pixels, avoiding stitching artifacts at high resolutions.

- Three-stage progressive training: Training proceeds through pre-training, continued pre-training, and fine-tuning. In the first stage, the backbone is frozen and only the generation head is trained, so that it learns to condition on the multimodal context. In the second stage, the backbone stays frozen while the model is trained for multi-resolution generation on samples selected for high aesthetic quality. In the third stage, the full model is unfrozen and chain-of-thought data is added, so that understanding, reasoning, and generation are trained jointly.
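
The three-stage schedule described above can be summarized as a small configuration table. The following is a minimal sketch, not the project's training code: the component names `backbone` and `generation_head`, the stage identifiers, and the helper `frozen_modules` are all hypothetical and chosen for illustration. It also assumes that the generation head is the component being trained in the second stage, which the description implies but does not state outright.

```python
from dataclasses import dataclass

# Hypothetical component names, chosen for illustration only.
ALL_MODULES = frozenset({"backbone", "generation_head"})


@dataclass(frozen=True)
class Stage:
    name: str
    trainable: frozenset  # components that receive gradient updates
    focus: str            # what the stage targets, paraphrased from the text


SCHEDULE = (
    Stage("pretraining", frozenset({"generation_head"}),
          "condition generation on the multimodal context"),
    # Assumption: the generation head is what is trained while the backbone is fixed.
    Stage("continued_pretraining", frozenset({"generation_head"}),
          "multi-resolution generation on high-aesthetic samples"),
    Stage("fine_tuning", ALL_MODULES,
          "joint understanding, reasoning, and generation with chain-of-thought data"),
)


def frozen_modules(stage: Stage) -> frozenset:
    """Return the components whose weights stay fixed during this stage."""
    return ALL_MODULES - stage.trainable


for stage in SCHEDULE:
    frozen = sorted(frozen_modules(stage)) or ["(none)"]
    print(f"{stage.name}: train={sorted(stage.trainable)}, frozen={frozen}")
```

In a real training loop, the set returned by `frozen_modules` would decide which parameter groups have gradients disabled before each stage begins.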

InternVL-U project links

- GitHub repository: https://github.com/OpenGVLab/InternVL-U

- HuggingFace model hub: https://huggingface.co/InternVL-U/InternVL-U

- arXiv technical paper: https://arxiv.org/pdf/2603.09877

Application scenarios of InternVL-U

- Scientific research and education: Provides researchers and students with professional visuals such as molecular structures, algorithm flowcharts, and force diagrams, supporting classroom demonstrations and the preparation of figures for papers.

- Smart office: Automates document generation, batch poster editing, and simultaneous modification of text in multiple regions, speeding up the production of business documents and marketing materials.

- Creative design: Helps designers quickly produce high-fidelity concept art, stylized images, and visual assets at multiple resolutions, lowering the barrier to professional design.

- Content operations: Lets new-media operators generate playful content such as stickers and memes in one step, tailored to social media.

- Industrial manufacturing: Performs CAD multi-view conversion, three-dimensional geometric operations, and rotation of three-dimensional objects, supporting engineering design and product prototype visualization.