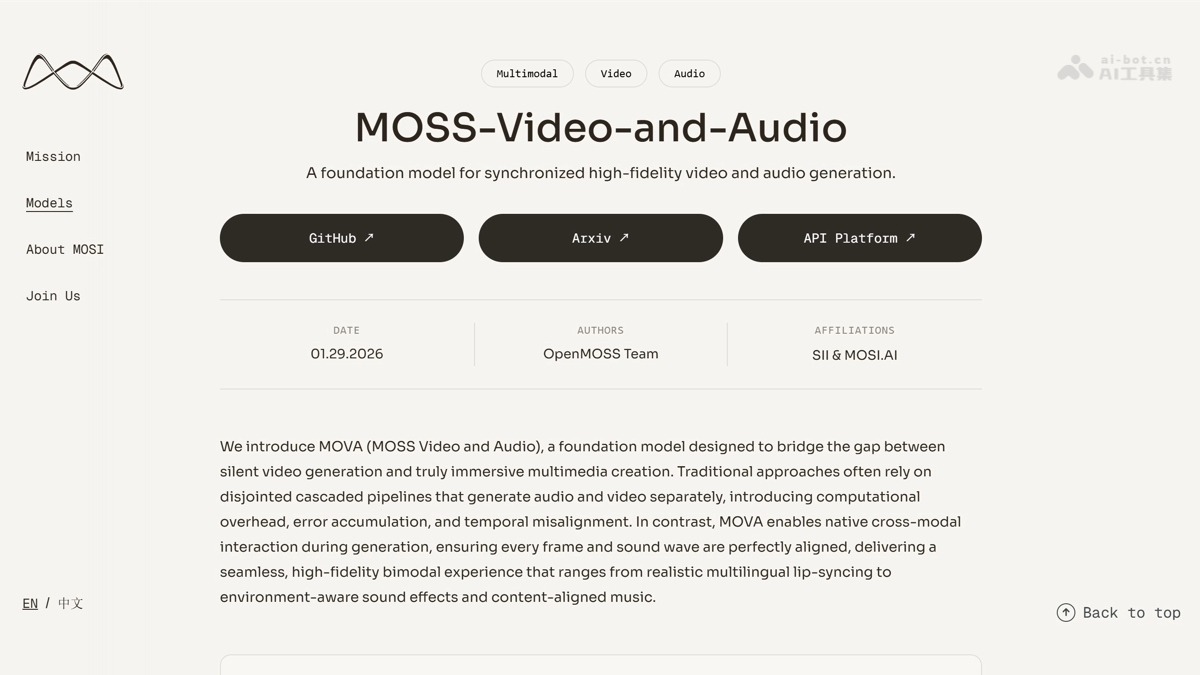

MOVA: an open-source end-to-end audio and video generation model from Shanghai Innovation Institute and MOSI


MOVA (MOSS Video and Audio) is an end-to-end audio and video generation model jointly released by the OpenMOSS team at Shanghai Innovation Institute and MOSI. It is China's first high-performance open-source model of this kind. Rather than producing silent video, the model uses a heterogeneous dual-tower architecture with a bidirectional bridge module so that the two modalities interact natively during generation. It has 32 billion parameters in a mixture-of-experts (MoE) architecture, of which 18 billion are active at inference. It generates up to 8 seconds of 720p video together with matching audio in a single pass, and performs especially well at film-quality lip sync and at fitting ambient sound effects to the scene.

Main functions of MOVA

- End-to-end audio and video generation : The model outputs video and matching audio in a single pass, so clips are no longer silent.

- Dual-mode input : Supports image + text or text-only prompts, giving flexible control over the generated content.

- Cinematic lip sync : The model accurately matches a character's lip movements to the speech, and supports multi-character dialogue in Chinese and English.

- Intelligent ambient sound effects : Automatically synthesizes background music, action sounds, and environmental sounds that match the scene.

- Video text rendering : The model can generate clear, readable dynamic text at specified positions in the frame.

- High resolution output : The model generates audio-visual clips at up to 720p resolution and up to 8 seconds in length.

Technical principles of MOVA

- Heterogeneous dual-tower architecture : A 14B video diffusion model and a 1.3B audio diffusion model process visual and auditory information respectively. A bidirectional bridge module fuses the hidden states of the two towers through deep cross-attention, so that image generation stays aware of the audio rhythm throughout the process.

- Cross-modal temporal alignment : Video and audio differ greatly in sampling density. The Aligned RoPE mechanism rescales the token positions of both modalities onto the same physical time axis, which addresses audio-video desynchronization at its source.

- Progressive training strategy : The model is trained in three coarse-to-fine stages. The first stage uses low-resolution 360p data so that the randomly initialized bridge module quickly learns audio-video alignment, the second improves alignment stability, and the third scales up to 720p to refine image quality.

- Dual CFG inference : Joint audio-video generation has two control sources, the text prompt and the cross-modal bridge. Dual classifier-free guidance (CFG) lets the guidance weight of each be adjusted independently, preserving picture quality in general scenes and improving lip-sync accuracy in dialogue scenes.
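
The time alignment and dual guidance ideas above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the token rates (6 video tokens and 48 audio tokens per second), the function names, and the nested form of the guidance formula are hypothetical and are not taken from the MOVA code base, whose actual formulation may differ.

```python
# Hypothetical latent token rates (tokens per second of media); not taken from MOVA.
VIDEO_TOKENS_PER_SECOND = 6.0
AUDIO_TOKENS_PER_SECOND = 48.0


def aligned_positions(num_tokens, tokens_per_second):
    """Return each token's position in seconds on a shared physical-time axis."""
    return [index / tokens_per_second for index in range(num_tokens)]


def rope_angles(position, dim=8, base=10000.0):
    """Standard RoPE rotation angles for one position (one angle per channel pair)."""
    return [position / base ** (2 * k / dim) for k in range(dim // 2)]


def dual_cfg(uncond, text_only, text_and_bridge, text_weight, bridge_weight):
    """Combine three denoiser outputs using separate text and bridge guidance weights.

    Assumed nested form: start from the unconditional prediction, add the text
    direction, then add the extra direction contributed by the bridge.
    """
    return [
        u + text_weight * (t - u) + bridge_weight * (tb - t)
        for u, t, tb in zip(uncond, text_only, text_and_bridge)
    ]


# An 8-second clip: 48 video tokens and 384 audio tokens along the time axis.
video_pos = aligned_positions(48, VIDEO_TOKENS_PER_SECOND)
audio_pos = aligned_positions(384, AUDIO_TOKENS_PER_SECOND)

# Video token 6 and audio token 48 both sit at t = 1.0 s, so they share one rotation.
same_rotation = rope_angles(video_pos[6]) == rope_angles(audio_pos[48])

# With both weights at 1.0 the result is just the fully conditioned prediction.
plain = dual_cfg([0.0], [1.0], [2.0], text_weight=1.0, bridge_weight=1.0)

# Raising bridge_weight pushes further along the bridge direction only.
dialogue = dual_cfg([0.0], [1.0], [2.0], text_weight=1.0, bridge_weight=3.0)
```

In this sketch, raising `bridge_weight` strengthens only the audio-video coupling, which is the kind of independent adjustment the dual CFG item describes for dialogue scenes, while `text_weight` continues to control how closely the output follows the prompt.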

MOVA project links

- Official project website : https://mosi.cn/models/mova

- GitHub repository : https://github.com/OpenMOSS/MOVA

- HuggingFace model collection : https://huggingface.co/collections/OpenMOSS-Team/mova

MOVA application scenarios

- Film and television production : Quickly generates storyboard previews and dubbing samples, reducing pre-production costs and speeding up creative validation.

- Short video creation : Gives creators high-quality story footage with sound effects, improving production efficiency and broadening the range of content formats.

- Game development : Automatically generates cutscenes and character dialogue with synchronized audio and video for an immersive experience, shortening the development cycle.

- Education and training : Produces multilingual instructional videos with accurate lip sync, supporting content adaptation for global audiences and improving learning outcomes.

- E-commerce marketing : Produces product showcase videos with narration and background music, speeding up iteration on marketing content and improving conversion rates.