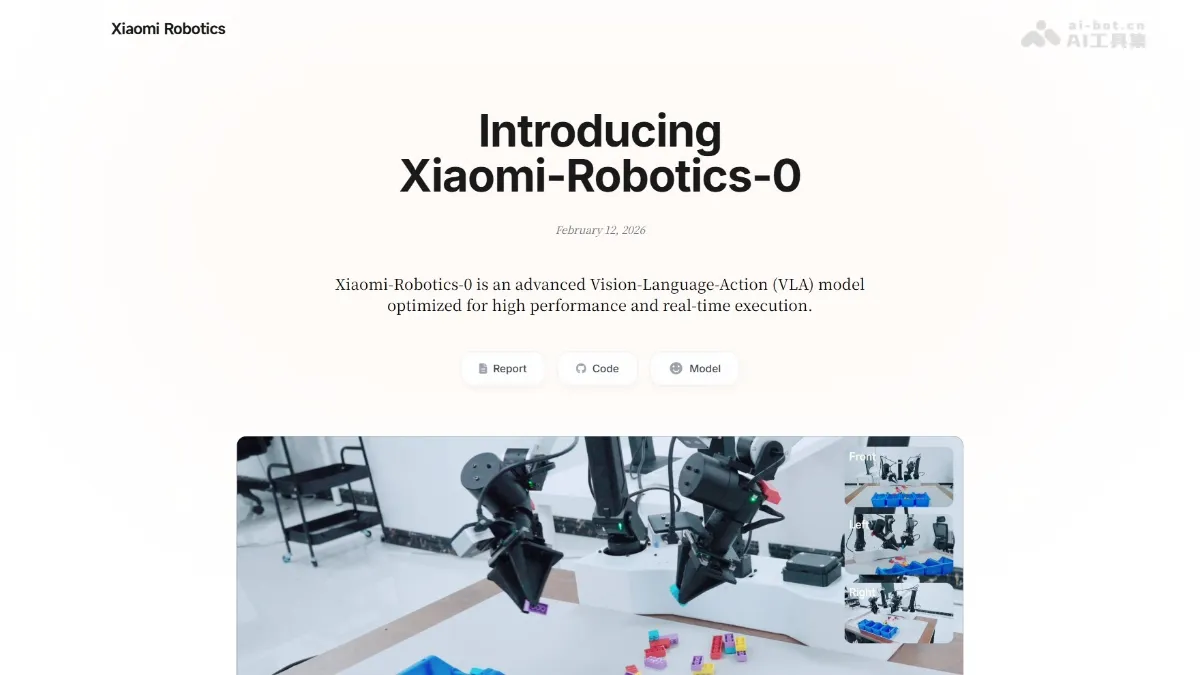

Xiaomi-Robotics-0 - Xiaomi's open-source VLA robot model

Xiaomi-Robotics-0 is Xiaomi's first open-source VLA (Vision-Language-Motion) robot model, boasting 4.7 billion parameters. It employs a MoT hybrid architecture, with the Qwen3-VL multimodal model acting as the "brain" to understand visual and language commands. Diffusion...

Xiaomi-Robotics-0 is Xiaomi’s first-generation open-source robot VLA (Vision-Language-Action) large model. It has 4.7 billion parameters and adopts MoT hybrid architecture. Qwen3-VLThe multi-modal model serves as the “brain” to understand visual language instructions, and the Diffusion Transformer serves as the “cerebellum” to generate high-frequency action blocks. Innovatively introduces asynchronous execution and Λ-shape attention mask to solve the action lag caused by reasoning delay and achieve real-time smooth control on consumer-grade graphics cards. It has refreshed SOTA in simulation benchmark tests such as LIBERO and CALVIN, and has been successfully applied to real machine dual-arm operation tasks such as building block disassembly and towel folding.

Main features of Xiaomi-Robotics-0

- natural language understanding : The model can parse human fuzzy instructions and identify spatial relationships and operational intentions from visual input.

- Action generation control : The model can output high-frequency and smooth action sequences to drive the robot to complete precise physical operations.

- Real-time asynchronous execution : Supports parallel reasoning and execution, eliminates delays and lags, and ensures coherent and smooth actions.

- Cooperative operation of both arms : Supports the cooperation of both hands to complete complex long-term tasks such as dismantling building blocks and folding towels.

- Adaptive strategy adjustment : The model can automatically switch action strategies to respond flexibly when the capture fails or the environment changes.

- Multimodal capability retention : The model retains general understanding capabilities such as visual question answering and object detection to prevent catastrophic forgetting.

Technical principles of Xiaomi-Robotics-0

- MoT hybrid architecture : The Qwen3-VL-4B multi-modal model is used as the “brain” to process visual language input, and the Diffusion Transformer as the “cerebellum” is responsible for action generation. The total number of parameters is 4.7 billion, taking into account both general understanding and fine control.

- two-stage training : The first stage uses the Action Proposal mechanism to let VLM learn the action distribution to align the feature space, and mix visual language and robot data to prevent forgetting; the second stage freezes the VLM and specializes in training DiT to recover precise action sequences from noise through flow matching.

- Asynchronous execution mechanism : The robot executes the current action block while reasoning about the next block in parallel. Use Clean Action Prefix to use the action at the previous moment as the input condition to ensure that the trajectory sequence is continuous and mechanically eliminate action gaps caused by reasoning delays.

- Λ-shape attention mask : Replaces DiT’s causal attention mask, supports noise tokens immediately adjacent to the prefix to pay attention to historical actions to achieve smooth transition, and prohibits subsequent tokens from accessing the prefix, forcing them to pay attention to visual signals, preventing the model from copying inertial actions, and improving the response sensitivity to sudden changes in the environment.

Xiaomi-Robotics-0 project address

- Project official website :https://xiaomi-robotics-0.github.io/

- GitHub repository :https://github.com/XiaomiRobotics/Xiaomi-Robotics-0

- HuggingFace model library :https://huggingface.co/collections/XiaomiRobotics/xiaomi-robotics-0

- technical paper :https://xiaomi-robotics-0.github.io/assets/paper.pdf

Application scenarios of Xiaomi-Robotics-0

- Industrial precision assembly : The model can accurately disassemble complex assemblies composed of up to 20 building blocks, and is suitable for precision assembly scenarios such as electronic products and automobile parts.

- Home service cleaning : The model can actively swing towels to expose covered corners, identify excess items and put them back, which is suitable for housework assistance and elderly care scenarios.

- Logistics warehousing sorting : The model relies on its high-frequency and smooth motion generation capabilities to adapt to the diverse product processing needs of different shapes and materials.

- Scientific research, education and development : The model supports universities and research institutions to carry out embodied intelligence algorithm research, robot control strategy development and teaching demonstrations.

- Business interactive display : The model can be deployed in exhibition halls, stores, press conferences and other scenarios to demonstrate low-latency, high-fluency human-machine collaboration capabilities and enhance the brand’s technical image. ©