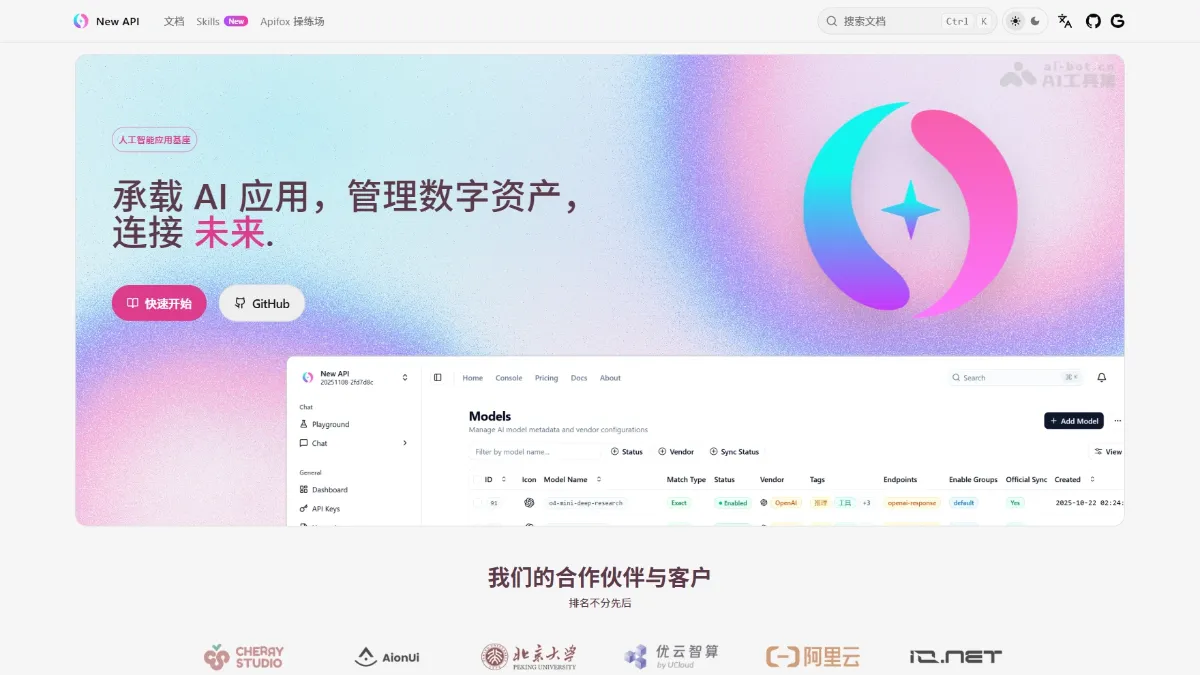

New API - Open Source AI Large Model Gateway and Asset Management System

New API is a next-generation AI gateway and asset management system. As an AI foundation platform, it provides unified access to more than 30 mainstream AI services worldwide (OpenAI, Claude, Gemini, DeepSeek, and others). Its core features include a unified OpenAI-compatible interface, intelligent routing with load balancing, fine-grained billing and permission control, and real-time data dashboards. It also supports advanced functions such as conversion between API formats, reasoning-effort control, and cache billing. The project is released under the AGPLv3 open-source license, supports one-click Docker deployment, and suits scenarios ranging from individual developers to enterprise-level multi-tenant use.

Main functions of New API

- Unified interface management: Provides a single API endpoint compatible with the OpenAI format, connecting to more than 30 mainstream AI service providers worldwide.

- Intelligent routing and scheduling: Supports multi-channel load balancing, automatic failover, and weighted random distribution to keep the service highly available.

- Fine-grained billing system: Supports per-request or usage-based billing, prepaid top-ups, multi-rate configuration, and cache billing.

- Security and permission control: Provides token group management, model access restrictions, API call auditing, and login through multiple third-party platforms.

- Format conversion: Supports conversion between multiple API formats such as OpenAI, Claude Messages, and Google Gemini.

- Reasoning-effort control: Lets you select a high, medium, or low reasoning effort through a suffix on the model name.

- Real-time data dashboard: Provides a visual console, usage statistics and analysis, and cost monitoring.
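To make the reasoning-effort item above concrete, here is a minimal sketch of how a gateway might split such a suffix from a model name. The suffix format (`-high`, `-medium`, `-low`) and the function name `split_reasoning_suffix` are assumptions made for illustration; the exact convention New API uses should be checked against its documentation.

```python
from typing import Optional, Tuple

# Hypothetical convention: "<model>-high", "<model>-medium", "<model>-low".
EFFORT_LEVELS = ("high", "medium", "low")


def split_reasoning_suffix(model_name: str) -> Tuple[str, Optional[str]]:
    """Return (base_model, effort); effort is None when no known suffix is present."""
    base, sep, suffix = model_name.rpartition("-")
    if sep and base and suffix in EFFORT_LEVELS:
        return base, suffix
    return model_name, None
```

Under this assumption, a name such as `o3-mini-high` would be forwarded upstream as `o3-mini` with the effort set to `high`, while a name without a recognized suffix passes through unchanged.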

Key information and usage requirements of New API

- Project positioning: Next-generation AI gateway and asset management system; AI foundation platform

- Open-source license: GNU AGPLv3 (free to use; SaaS deployments must make their source code available)

- Compatibility: Developed on the basis of One API and fully compatible with the original One API database

- Supported languages: Simplified Chinese, Traditional Chinese, English, French, Japanese

- Deployment methods: Docker / Docker Compose / BT Panel (Pagoda Panel)

- Database: SQLite (default) / MySQL ≥ 5.7.8 / PostgreSQL ≥ 9.6

- Docker image: calciumion/new-api:latest

Core advantages of New API

- Unified access: A single endpoint compatible with the OpenAI format gives access to more than 30 mainstream AI service providers worldwide, removing the need to integrate each platform separately.

- Intelligent routing: Built-in multi-channel load balancing, automatic failover, and weighted random distribution keep AI services available and requests stable.

- Cost optimization: Cache billing, per-request or usage-based billing, and flexible multi-rate configuration help users control costs and manage expenses in detail.

- Format interoperability: Conversion between API formats such as OpenAI, Claude Messages, and Google Gemini lowers the barrier to adopting different models.

- Ready out of the box: One-click Docker deployment, full compatibility with the One API database, and visual installation through BT Panel (Pagoda Panel) simplify deployment.
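The routing behaviour described above (weighted random distribution with automatic failover) can be sketched as follows. This is an illustrative outline, not New API's actual implementation: the `Channel` class, the `call_with_failover` function, and the `send` callback are hypothetical names introduced here.

```python
import random
from dataclasses import dataclass
from typing import Any, Callable, List, Optional, Tuple


@dataclass
class Channel:
    """Hypothetical upstream channel with a routing weight."""
    name: str
    weight: int


def call_with_failover(
    channels: List[Channel],
    send: Callable[[Channel], Any],
    rng: Optional[random.Random] = None,
) -> Tuple[str, Any]:
    """Pick channels by weighted random choice; on failure, retry on the rest."""
    if rng is None:
        rng = random.Random()
    remaining = [c for c in channels if c.weight > 0]  # copy; input list is untouched
    errors = []
    while remaining:
        weights = [c.weight for c in remaining]
        channel = rng.choices(remaining, weights=weights, k=1)[0]
        try:
            return channel.name, send(channel)
        except Exception as exc:  # any upstream error triggers failover
            errors.append(f"{channel.name}: {exc}")
            remaining.remove(channel)
    raise RuntimeError("all channels failed: " + "; ".join(errors))
```

A channel with a higher weight is chosen more often, and a failed channel is excluded only for the current request. A production gateway would typically also track channel health across requests, which this sketch leaves out.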

How to use New API

- Deployment and installation: Clone the project repository locally, edit the configuration file, start the service with Docker, and open the system in a browser on the default port 3000.

- Initial configuration: Log in to the admin console to set up an administrator account, add each AI service provider's API key under channel management, and configure weights and failover strategies.

- Create access credentials: Create an API key on the token management page, set its quota limit, validity period, and allowed models, and issue separate credentials for different scenarios to isolate permissions.

- Access and use: Point your application's API base URL at the New API deployment address, replace the original key with the generated token, and keep using the standard OpenAI format to call models from multiple platforms.
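As an illustration of the last step, the sketch below builds an OpenAI-style chat request aimed at a New API deployment using only the Python standard library. The base URL `http://localhost:3000`, the `/v1/chat/completions` path (the standard OpenAI route, assumed to be exposed unchanged), the token value, and the helper name `build_chat_request` are all assumptions for this example.

```python
import json
import urllib.request


def build_chat_request(base_url: str, token: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-format chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # token created in token management
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it. An existing OpenAI SDK client should work the same way once its base URL and API key are changed, which is the point of keeping the OpenAI format.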

New API project address

- Official website: https://www.newapi.ai/

- GitHub repository: https://github.com/QuantumNous/new-api

Comparison of New API with similar products

| Dimension | New API | One API | LiteLLM |
|---|---|---|---|
| Project positioning | AI gateway and asset management system, AI foundation platform | Open-source AI interface aggregation and management platform | Multi-LLM routing and load balancing tool |
| Development team | QuantumNous | Community open-source project | BerriAI team |
| Open-source license | GNU AGPLv3 | MIT | MIT |
| Core functions | Unified interface, intelligent routing, fine-grained billing, format conversion, permission control | Channel management, token distribution, quota control | Model routing, failover, observability and monitoring |
| Supported providers | 30+ mainstream service providers (OpenAI, Claude, Gemini, DeepSeek, Midjourney, Suno, etc.) | 20+ mainstream service providers | 100+ model providers |
| Format conversion | OpenAI ↔ Claude, OpenAI → Gemini, thinking-content conversion | Mainly compatible with the OpenAI format | Output unified to the OpenAI format |
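To show what an OpenAI → Claude conversion involves, here is a simplified sketch that maps an OpenAI-style chat request onto the shape of a Claude Messages request. It is not New API's converter: the function name `openai_to_claude` and the default `max_tokens` value are assumptions, and only plain-text message content is handled.

```python
from typing import Any, Dict, List


def openai_to_claude(request: Dict[str, Any], default_max_tokens: int = 1024) -> Dict[str, Any]:
    """Convert an OpenAI-style chat request into a Claude Messages-style request."""
    system_parts: List[str] = []
    messages: List[Dict[str, str]] = []
    for msg in request.get("messages", []):
        if msg["role"] == "system":
            # Claude takes the system prompt as a top-level field, not as a message.
            system_parts.append(msg["content"])
        else:
            messages.append({"role": msg["role"], "content": msg["content"]})

    converted: Dict[str, Any] = {
        "model": request["model"],  # model-name mapping is left to the caller
        "messages": messages,
        # max_tokens is required by the Claude Messages format.
        "max_tokens": request.get("max_tokens", default_max_tokens),
    }
    if system_parts:
        converted["system"] = "\n\n".join(system_parts)
    return converted
```

A full converter would also need to handle streaming, tool calls, images, and the reverse mapping of responses, which is where a gateway saves client applications the most work.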

Application scenarios of New API

- Individual developers building their own service: Quickly set up a private AI API relay, manage API keys for multiple platforms in one place, control personal usage costs through precise billing, and avoid switching back and forth between service providers.

- Startup product development: Provide stable multi-model backend support for AI applications to keep products and services highly available, while monitoring usage and costs through data dashboards to optimize resource allocation.

- Internal enterprise AI platform: Build an enterprise-level AI asset management system with centralized control of model access and spending, to meet compliance requirements and improve management efficiency.

- AI model comparison testing: Switch quickly between models from different vendors through a unified interface and compare how GPT, Claude, Gemini, DeepSeek, and others perform on real tasks to support technology selection.